Vectors and Geometry in Space

In Lesson 1AM we developed the algebra of vectors in the plane, and in Lesson 1PM we used that algebra to recover geometric facts about lines, distances, and loci. We now extend this framework to three-dimensional space. While the geometric complexity increases, the algebraic methods developed for the plane generalise naturally. In this chapter, we construct the vector space $\mathbb{R}^3$ and explore the fundamental interplay between algebraic equations and solid geometry.

Cartesian Coordinates in Space

To define the position of a point in space algebraically, we require a reference system consisting of three mutually perpendicular lines intersecting at a common point, which we designate as the origin $O$. We assign a direction to each line, establishing them as the $x$-axis, the $y$-axis, and the $z$-axis.

Orientation

Unlike the plane, where the relative orientation of the axes is generally fixed by a counter-clockwise convention from $x$ to $y$, three-dimensional space offers a choice of orientation. There are $3! \cdot 2^3 = 48$ possible assignments of directed axes to three mutually orthogonal lines: six ways to label the lines, and two directions for each. These assignments partition into two distinct classes.

- Right-Hand Systems: Assignments that can be continuously rotated into one another.

- Left-Hand Systems: Assignments that are mirror images of the first group and cannot be reached via rigid rotation.

A coordinate system is said to be right-handed if the $x$, $y$, and $z$ axes correspond respectively to the thumb, index finger, and middle finger of the right hand when extended in mutually perpendicular directions.

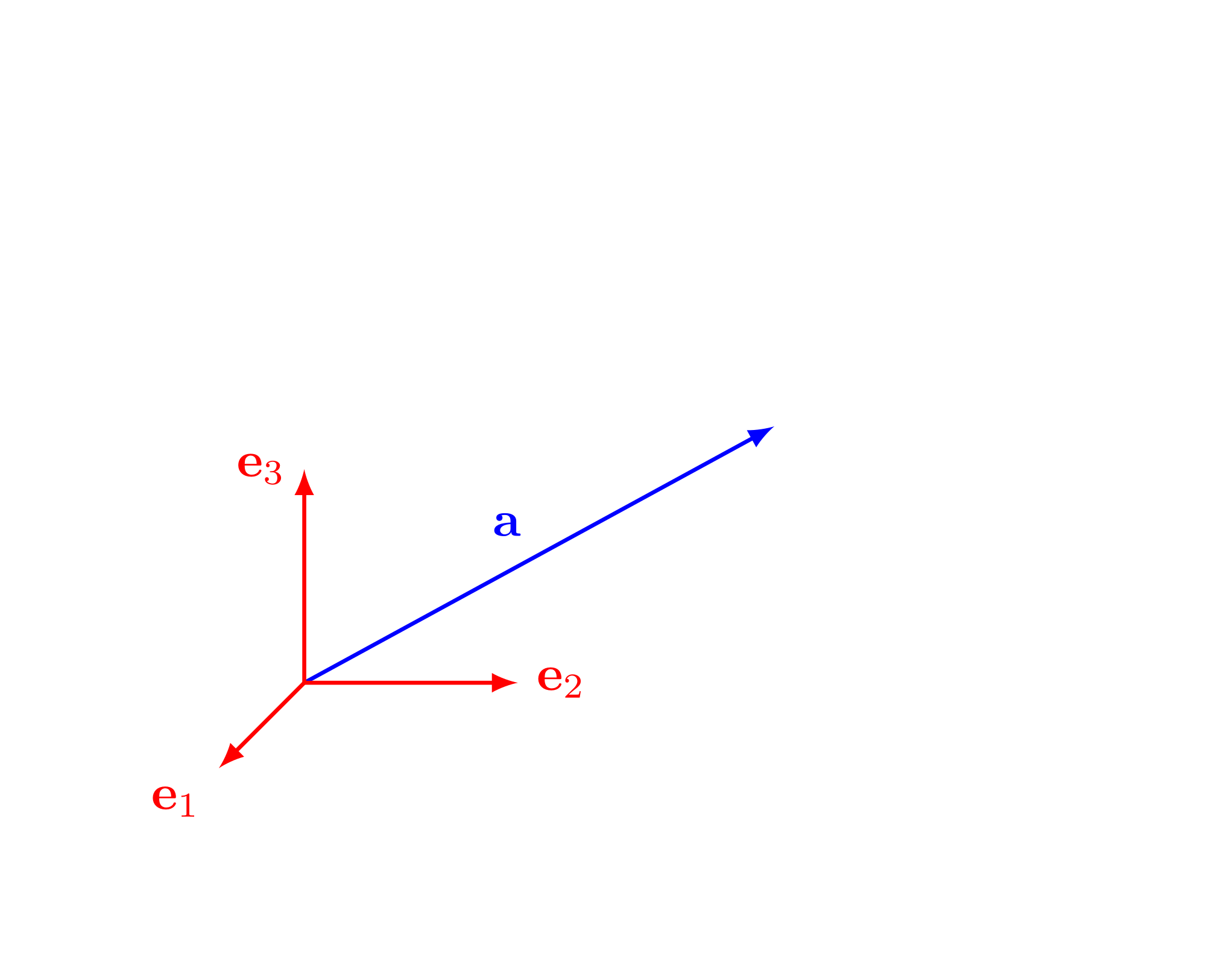

We shall exclusively adopt the right-hand coordinate system. This choice ensures consistency with standard conventions in physics and vector calculus, particularly regarding the cross product (to be discussed in subsequent sections). The following figure fixes the orientation we shall use throughout the chapter.

The Bijection between Points and Triples

We identify each coordinate axis with the real line $\mathbb{R}$. Given an ordered triple of real numbers $(a, b, c)$, we associate it with a unique point $P$ in space via the intersection of three planes:

- The plane perpendicular to the $x$-axis at $x = a$.

- The plane perpendicular to the $y$-axis at $y = b$.

- The plane perpendicular to the $z$-axis at $z = c$.

Conversely, for any point $P$ in space, we construct the planes passing through $P$ perpendicular to the axes, intersecting them at coordinates $a$, $b$, $c$ respectively. This establishes a one-to-one correspondence between points of space and ordered triples of real numbers, and hence between solid geometry and algebra.

The entries of the ordered triple $(a, b, c)$ associated with a point $P$ are called the coordinates of $P$. We write $P = (a, b, c)$. The set of all such ordered triples is denoted by $\mathbb{R}^3$.

As in Lesson 1AM, the same list of real numbers may appear either as a point or as a vector. The point $(a, b, c)$ is a location in space, whereas the vector with components $a$, $b$, $c$ is a displacement. The algebra is the same component-wise; the geometric role is not.

Fix the origin $O$ at a survey marker on the ground. Let the positive $x$-axis point due east, the positive $y$-axis due north, and the positive $z$-axis vertically upward. If a light fixed to a crane is $a$ metres east of the marker, $b$ metres south of it, and $c$ metres above the ground (with $a, b, c > 0$), then its position is recorded by the triple

$$(a, -b, c).$$

If the same light is moved to a point $d > 0$ metres west of the marker while retaining the same north-south and vertical displacements, then its coordinates become

$$(-d, -b, c).$$

Thus the signs of the coordinates record on which side of each coordinate plane the point lies, and the ordered triple specifies the position completely.

Consider a cube of side length $s$ resting in the first octant (where all coordinates are non-negative). One vertex is positioned at the origin $O$, and the adjacent edges lie along the positive coordinate axes. The vertex diametrically opposite to the origin is found by translating $s$ units along the $x$-axis, $s$ units along the $y$-axis, and $s$ units along the $z$-axis. Thus, its coordinates are $(s, s, s)$.

The Euclidean Metric in Space

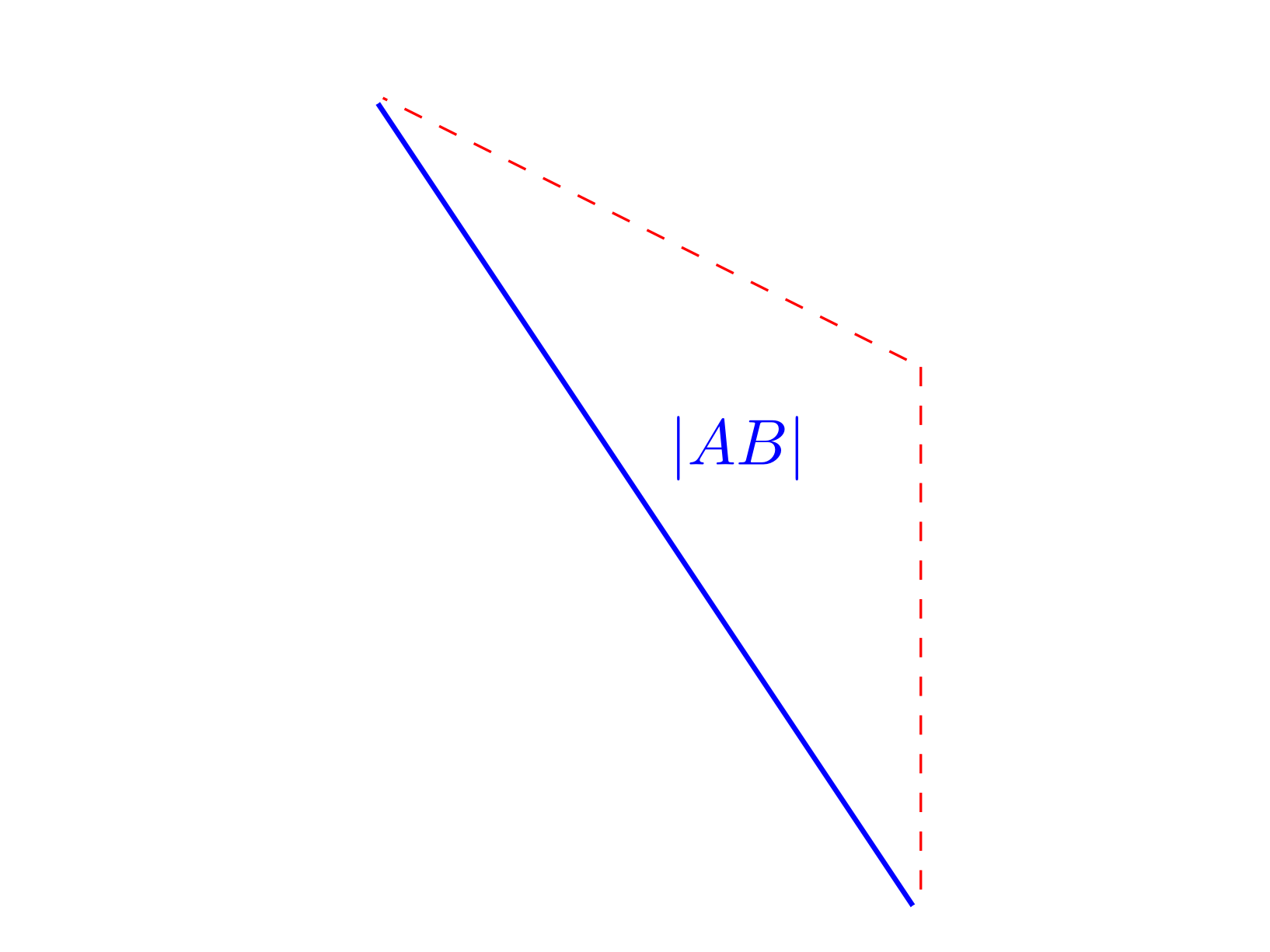

The metric properties of space are derived directly from the coordinate definitions by iteratively applying Pythagoras’ theorem. Just as distance in $\mathbb{R}^2$ requires a single application of the theorem, distance in $\mathbb{R}^3$ requires two.

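Let $P = (x_1, y_1, z_1)$ and $Q = (x_2, y_2, z_2)$ be points in space. The Euclidean distance between $P$ and $Q$, denoted $d(P, Q)$, is given by

$$d(P, Q) = \sqrt{(x_2 - x_1)^2 + (y_2 - y_1)^2 + (z_2 - z_1)^2}.$$

Let $P = (x_1, y_1, z_1)$ and $Q = (x_2, y_2, z_2)$. Consider the auxiliary point $R = (x_2, y_2, z_1)$.

The points $P$ and $R$ both lie in the horizontal plane $z = z_1$. Within this plane, the distance between them is determined by the planar distance formula:

$$d(P, R)^2 = (x_2 - x_1)^2 + (y_2 - y_1)^2.$$

The points $R$ and $Q$ share the same $x$ and $y$ coordinates, meaning the segment $RQ$ is parallel to the $z$-axis. The length of this vertical segment is precisely $|z_2 - z_1|$.

Because the segment $RQ$ is orthogonal to the plane $z = z_1$, it is orthogonal to any line within that plane, including the segment $PR$. Therefore, the triangle $PRQ$ is right-angled at $R$. Applying Pythagoras’ theorem to this triangle yields:

$$d(P, Q)^2 = d(P, R)^2 + d(R, Q)^2 = (x_2 - x_1)^2 + (y_2 - y_1)^2 + (z_2 - z_1)^2.$$

Taking the positive square root completes the proof.

■

The next figure isolates the planar diagonal and the vertical edge used in the proof.

If $P$ is the origin $O = (0, 0, 0)$ and $Q = (x, y, z)$, the distance formula becomes

$$d(O, Q) = \sqrt{x^2 + y^2 + z^2}.$$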

This is the direct spatial analogue of the magnitude formula from Lesson 1AM.
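The two-step argument used in the proof can be mirrored numerically. The following Python sketch is illustrative only: the function names `distance_two_step` and `distance_direct` are not part of these notes, and points are assumed to be given as 3-tuples of numbers. The first function computes the planar diagonal and then combines it with the vertical edge; the second applies the closed formula directly.

```python
import math

def distance_two_step(p, q):
    """Distance in space via two applications of Pythagoras' theorem."""
    # First application: diagonal inside the horizontal plane through p.
    planar = math.hypot(q[0] - p[0], q[1] - p[1])
    # Length of the edge parallel to the z-axis.
    vertical = abs(q[2] - p[2])
    # Second application: hypotenuse of the right-angled triangle.
    return math.hypot(planar, vertical)

def distance_direct(p, q):
    """Distance in space from the closed formula."""
    return math.sqrt(sum((b - a) ** 2 for a, b in zip(p, q)))
```

For the hypothetical points `(1, 2, 3)` and `(3, 5, 9)` the coordinate differences are 2, 3, and 6, so both functions should agree with $\sqrt{4 + 9 + 36} = 7$ up to floating-point rounding.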

Find the exact distance between the points and .

Applying the distance formula:

The points are exactly units apart.

The Equation of a Sphere

The distance formula allows us to characterise geometric loci in space algebraically. The most fundamental of these is the sphere, whose definition mirrors that of a circle in the plane.

A sphere is the locus of all points in space at a fixed distance $r > 0$ (the radius) from a fixed point $C$ (the centre).

Let the centre be $C = (x_0, y_0, z_0)$ and let $P = (x, y, z)$ be an arbitrary point on the sphere. By definition, the distance $d(C, P)$ is $r$. Squaring both sides and applying the distance formula, we obtain the standard equation of a sphere:

$$(x - x_0)^2 + (y - y_0)^2 + (z - z_0)^2 = r^2.$$

If an equation is given in an expanded polynomial form, we recover the geometric properties of the sphere by completing the square for each variable independently.
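Completing the square can be carried out once and for all in symbols. The sketch below assumes the expanded equation has been normalised to the form $x^2 + y^2 + z^2 + dx + ey + fz + g = 0$; the coefficient names `d`, `e`, `f`, `g` and the function name `sphere_from_expanded` are chosen here for illustration and do not come from the notes.

```python
import math

def sphere_from_expanded(d, e, f, g):
    """Centre and radius of x^2 + y^2 + z^2 + d*x + e*y + f*z + g = 0.

    Returns (centre, radius), or None when the right-hand side obtained
    after completing the square is not positive (no genuine sphere).
    """
    # Each linear coefficient contributes half its value, negated, to the centre.
    centre = (-d / 2, -e / 2, -f / 2)
    # Completing the square adds (d/2)^2 + (e/2)^2 + (f/2)^2 to both sides.
    r_squared = (d * d + e * e + f * f) / 4 - g
    if r_squared <= 0:
        return None
    return centre, math.sqrt(r_squared)
```

As a made-up instance, $x^2 + y^2 + z^2 - 2x + 4y - 6z - 11 = 0$ rearranges to $(x - 1)^2 + (y + 2)^2 + (z - 3)^2 = 25$, so the function returns the centre $(1, -2, 3)$ and the radius $5$. When the right-hand side is zero the locus is a single point, and when it is negative the locus is empty; the sketch reports both cases as `None`.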

Determine the set of points in $\mathbb{R}^3$ satisfying the equation:

We collect the terms for each variable and complete the square:

Adding the necessary constants to both sides:

Factoring the perfect squares gives:

This represents a sphere centred at with a radius of .

The following figure shows the centre and one radius for this sphere.

Consider the sphere

Its centre is the origin and its radius is . To test whether a point lies on the sphere, we substitute its coordinates into the equation.

For , we obtain

so the point lies on the sphere.

For , we obtain

so this point does not lie on the sphere.
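The substitution test is easy to automate. The following sketch is a hedged illustration rather than part of the notes: the function name `lies_on_sphere` and the tolerance parameter `tol` are assumptions, and the sample values in the usage note are invented for the purpose.

```python
import math

def lies_on_sphere(point, centre, radius, tol=1e-9):
    """Check whether the squared distance from centre to point equals radius^2."""
    squared = sum((p - c) ** 2 for p, c in zip(point, centre))
    # A tolerance is used because floating-point arithmetic is inexact.
    return math.isclose(squared, radius ** 2, rel_tol=tol, abs_tol=tol)
```

For a sphere of radius 3 centred at the origin, the point `(1, 2, 2)` passes the test because $1 + 4 + 4 = 9$, whereas `(1, 1, 1)` fails because $1 + 1 + 1 = 3 \neq 9$.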

Problem 17

A sphere passes through the origin and has its centre at the point . Determine the equation of the sphere and find the coordinates of the point diametrically opposite to the origin.

Problem 18

Determine whether the point lies on the sphere centred at with radius .

Vectors in Space

The passage from the Cartesian plane to space replaces coordinate pairs with ordered triples. Algebraically, very little changes. Geometrically, however, we now work with directed segments in three dimensions rather than in the plane.

A vector in space is an ordered triple of real numbers, written as a column. The individual numbers are called the components of the vector. The set of all such vectors is denoted by $\mathbb{R}^3$. Thus, a vector $\mathbf{v} \in \mathbb{R}^3$ is given by

$$\mathbf{v} = \begin{pmatrix} v_1 \\ v_2 \\ v_3 \end{pmatrix}, \qquad v_1, v_2, v_3 \in \mathbb{R}.$$

As in Lesson 1AM, we keep the distinction between points and vectors strict. The ordered triple $(v_1, v_2, v_3)$ specifies the point $P$, whereas the column $\begin{pmatrix} v_1 \\ v_2 \\ v_3 \end{pmatrix}$ denotes a vector. The same three real numbers appear in both places, but their meanings are not interchangeable.

Geometrically, the vector $\mathbf{v}$ is represented by the directed segment from the origin $O$ to the point $P = (v_1, v_2, v_3)$. In that situation, $\mathbf{v}$ is the position vector of $P$. This is exactly the same idea as in the plane, only now a third component records vertical displacement as well.

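Three vectors play a distinguished role. They record unit motion along the three coordinate axes:

$$\mathbf{i} = \begin{pmatrix} 1 \\ 0 \\ 0 \end{pmatrix}, \qquad \mathbf{j} = \begin{pmatrix} 0 \\ 1 \\ 0 \end{pmatrix}, \qquad \mathbf{k} = \begin{pmatrix} 0 \\ 0 \\ 1 \end{pmatrix}.$$

The zero vector is

$$\mathbf{0} = \begin{pmatrix} 0 \\ 0 \\ 0 \end{pmatrix}$$

and corresponds to the degenerate directed segment at the origin.

Every vector in space is built from these three basis vectors in the evident way:

$$\mathbf{v} = \begin{pmatrix} v_1 \\ v_2 \\ v_3 \end{pmatrix} = v_1 \mathbf{i} + v_2 \mathbf{j} + v_3 \mathbf{k}.$$

So the components of $\mathbf{v}$ are precisely the coefficients of $\mathbf{i}$, $\mathbf{j}$, and $\mathbf{k}$ in this decomposition.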

Let

Then

This says that to reach the endpoint of from the origin, we move units in the direction, unit in the opposite direction, and units in the direction.

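The length of the directed segment representing $\mathbf{v}$ is its magnitude. Since the distance from the origin to $(v_1, v_2, v_3)$ was computed above, the definition is immediate.

The magnitude (or norm) of a vector $\mathbf{v}$ with components $v_1, v_2, v_3$ is the non-negative real number

$$\|\mathbf{v}\| = \sqrt{v_1^2 + v_2^2 + v_3^2}.$$

Exactly as in the planar case, the zero vector is the unique vector with zero magnitude. Thus $\|\mathbf{v}\| = 0$ if and only if $\mathbf{v} = \mathbf{0}$.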

Let

Then

The Vector Space

Points in space are geometric locations. They are not added or scaled as points. Vectors, however, are algebraic objects, and the operations from Lesson 1AM extend component-wise without any change in principle.

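Let

$$\mathbf{u} = \begin{pmatrix} u_1 \\ u_2 \\ u_3 \end{pmatrix}, \qquad \mathbf{v} = \begin{pmatrix} v_1 \\ v_2 \\ v_3 \end{pmatrix},$$

and let $\lambda \in \mathbb{R}$.

- The vector sum is

$$\mathbf{u} + \mathbf{v} = \begin{pmatrix} u_1 + v_1 \\ u_2 + v_2 \\ u_3 + v_3 \end{pmatrix}.$$

- The scalar multiple is

$$\lambda \mathbf{u} = \begin{pmatrix} \lambda u_1 \\ \lambda u_2 \\ \lambda u_3 \end{pmatrix}.$$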

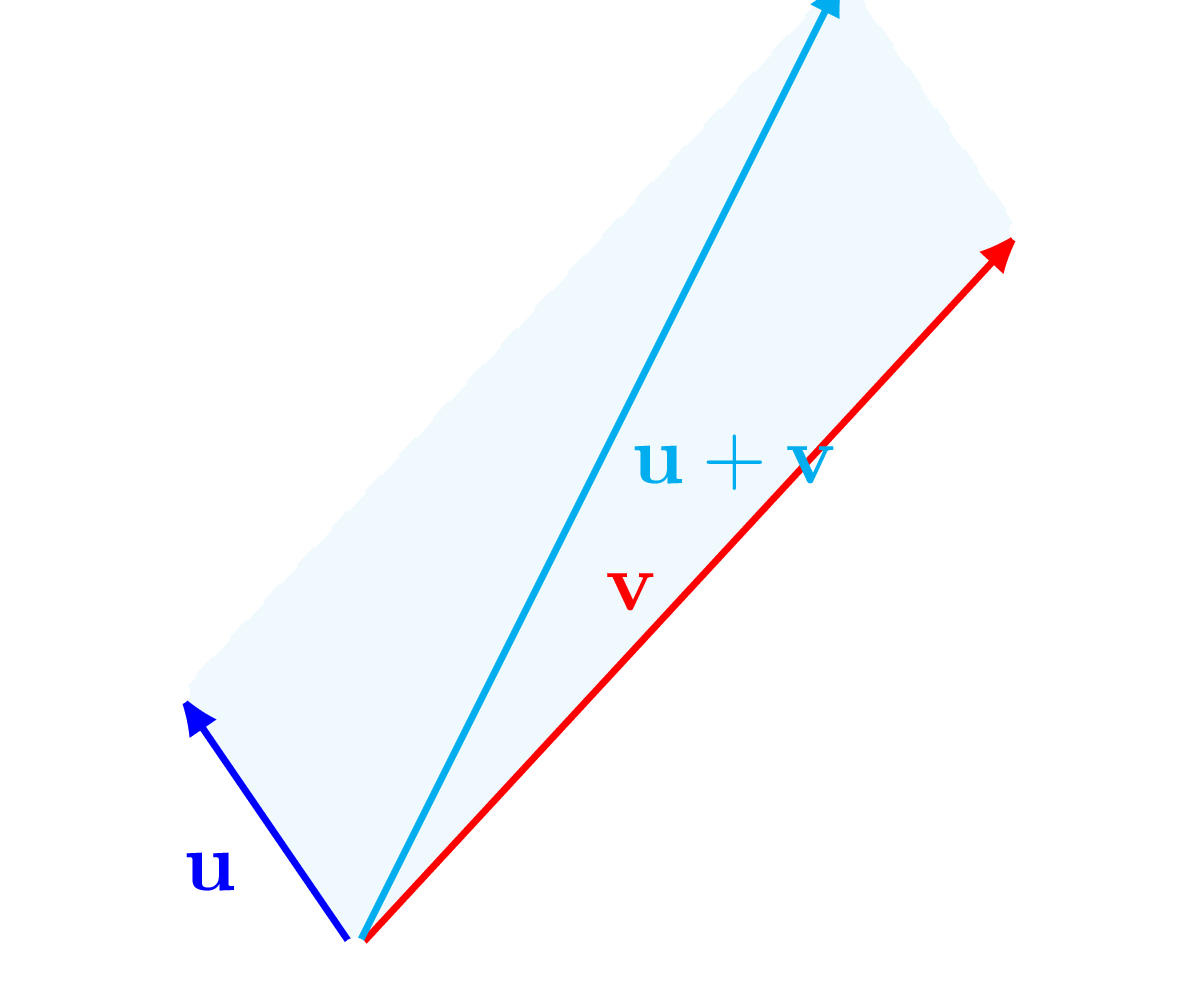

Geometrically, $\mathbf{u} + \mathbf{v}$ is still the diagonal of the parallelogram generated by $\mathbf{u}$ and $\mathbf{v}$. The only new feature is that this parallelogram now lies in a plane sitting inside $\mathbb{R}^3$, rather than in the ambient plane $\mathbb{R}^2$ itself.

For example, let

Then

The following figure shows the plane determined by $\mathbf{u}$, $\mathbf{v}$, and the origin. As in the planar case, the diagonal of the corresponding parallelogram is the sum vector.

Let

Then

The set of all ordered triples, equipped with these two operations, is the vector space $\mathbb{R}^3$. Its algebraic laws are the same as those of $\mathbb{R}^2$.
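The component-wise operations translate directly into code. The sketch below is one possible illustration, assuming vectors are stored as 3-tuples; the function names `add`, `scale`, and `norm` are chosen here and are not notation from the notes.

```python
import math

def add(u, v):
    """Component-wise sum of two vectors."""
    return tuple(a + b for a, b in zip(u, v))

def scale(lam, u):
    """Scalar multiple: every component is multiplied by lam."""
    return tuple(lam * a for a in u)

def norm(u):
    """Magnitude: square root of the sum of squared components."""
    return math.sqrt(sum(a * a for a in u))
```

With the sample vectors `u = (1, -2, 3)` and `v = (4, 0, -1)`, `add(u, v)` gives `(5, -2, 2)` and `scale(2, u)` gives `(2, -4, 6)`. Because each operation acts on one component at a time, laws such as `add(u, v) == add(v, u)` reduce to the corresponding laws for real numbers.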

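Let $\mathbf{u}, \mathbf{v}, \mathbf{w}$ be vectors in $\mathbb{R}^3$ and let $\lambda, \mu$ be scalars. Then:

- $\mathbf{u} + \mathbf{v} = \mathbf{v} + \mathbf{u}$.

- $(\mathbf{u} + \mathbf{v}) + \mathbf{w} = \mathbf{u} + (\mathbf{v} + \mathbf{w})$.

- There exists a unique vector $\mathbf{0}$ such that $\mathbf{u} + \mathbf{0} = \mathbf{u}$ for every $\mathbf{u}$.

- For every $\mathbf{u}$, there exists a unique vector $-\mathbf{u}$ such that $\mathbf{u} + (-\mathbf{u}) = \mathbf{0}$.

- $\lambda(\mu \mathbf{u}) = (\lambda\mu)\mathbf{u}$.

- $1\mathbf{u} = \mathbf{u}$.

- $\lambda(\mathbf{u} + \mathbf{v}) = \lambda\mathbf{u} + \lambda\mathbf{v}$.

- $(\lambda + \mu)\mathbf{u} = \lambda\mathbf{u} + \mu\mathbf{u}$.

These identities are checked component-wise, using only the algebraic laws of the real numbers. For example, writing $u_i$ and $v_i$ for the components of $\mathbf{u}$ and $\mathbf{v}$,

$$\mathbf{u} + \mathbf{v} = \begin{pmatrix} u_1 + v_1 \\ u_2 + v_2 \\ u_3 + v_3 \end{pmatrix} = \begin{pmatrix} v_1 + u_1 \\ v_2 + u_2 \\ v_3 + u_3 \end{pmatrix} = \mathbf{v} + \mathbf{u}.$$

All other properties are proved in exactly the same way.

■

Any theorem proved purely from these vector space axioms carries over from $\mathbb{R}^2$ to $\mathbb{R}^3$ without alteration. A basic instance is the zero product law.

Let $\lambda \in \mathbb{R}$ and let $\mathbf{v} \in \mathbb{R}^3$. Then $\lambda\mathbf{v} = \mathbf{0}$ if and only if $\lambda = 0$ or $\mathbf{v} = \mathbf{0}$.

The proof is identical to the axiomatic proof from Lesson 1AM. Only the vector space laws are used, and those laws are the same in $\mathbb{R}^3$ as in $\mathbb{R}^2$.

■

Problem 19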

Let

Determine the values of and such that . Are and linearly dependent?

Linear Combinations and Independence

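The concept of linear combinations, introduced in Lesson 1AM, extends naturally to $\mathbb{R}^3$. This generalisation forms the foundation for understanding dimension and basis in higher-dimensional vector spaces. What changes is not the algebra but the geometry recovered from it: one vector generates a line through the origin, two suitably placed vectors generate a plane through the origin, and three suitably placed vectors may generate the whole space.

Let $\mathbf{v}_1, \dots, \mathbf{v}_k$ be vectors in $\mathbb{R}^3$ and let $\lambda_1, \dots, \lambda_k$ be scalars. The vector defined by

$$\mathbf{w} = \lambda_1 \mathbf{v}_1 + \lambda_2 \mathbf{v}_2 + \dots + \lambda_k \mathbf{v}_k$$

is called a linear combination of the vectors $\mathbf{v}_1, \dots, \mathbf{v}_k$.

If $\mathbf{v} \neq \mathbf{0}$, then the vectors $\lambda\mathbf{v}$, with $\lambda \in \mathbb{R}$, form the line through the origin in the direction of $\mathbf{v}$. If $\mathbf{u}$ and $\mathbf{v}$ are not scalar multiples of one another, then the vectors $\lambda\mathbf{u} + \mu\mathbf{v}$, with $\lambda, \mu \in \mathbb{R}$, form the plane through the origin spanned by $\mathbf{u}$ and $\mathbf{v}$.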
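A linear combination is a finite sum of scaled vectors, which the following sketch computes one component at a time. The function name `linear_combination` and the representation of vectors as 3-tuples are assumptions made for illustration.

```python
def linear_combination(coeffs, vectors):
    """Return c_1*v_1 + ... + c_k*v_k for vectors given as 3-tuples."""
    if len(coeffs) != len(vectors):
        raise ValueError("need exactly one coefficient per vector")
    # Component i of the result is the weighted sum of the i-th components.
    return tuple(
        sum(c * v[i] for c, v in zip(coeffs, vectors))
        for i in range(3)
    )
```

For instance, `linear_combination([2, -1], [(1, 0, 0), (0, 1, 0)])` gives `(2, -1, 0)`. Whatever coefficients are chosen for these two sample vectors, the third component of the result stays zero, so every combination lies in a single plane through the origin.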

Take

Then

So the linear combinations of and are precisely the vectors lying in the plane .

Linear Independence of Two Vectors

The definition of linear independence for two vectors in space is the same as in the planar case.

Two vectors are linearly independent if neither is a scalar multiple of the other. If one is a scalar multiple of the other, they are linearly dependent.

We establish four equivalent conditions for linear dependence in space. Observe the expansion of the algebraic determinant condition compared to its planar counterpart.

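Let $\mathbf{u} = \begin{pmatrix} u_1 \\ u_2 \\ u_3 \end{pmatrix}$ and $\mathbf{v} = \begin{pmatrix} v_1 \\ v_2 \\ v_3 \end{pmatrix}$, and let $P$ and $Q$ be the points with position vectors $\mathbf{u}$ and $\mathbf{v}$. The following statements are equivalent:

1. $\mathbf{u}$ and $\mathbf{v}$ are linearly dependent.

2. The points $O$, $P$, and $Q$ are collinear.

3. There exist scalars $\lambda$ and $\mu$, not both zero, such that $\lambda\mathbf{u} + \mu\mathbf{v} = \mathbf{0}$.

4. $u_1 v_2 - u_2 v_1 = u_1 v_3 - u_3 v_1 = u_2 v_3 - u_3 v_2 = 0$.

The equivalence of statements 1, 2, and 3 is proved exactly as in the plane. We therefore only check the equivalence of 1 and 4.

Assume statement 1 holds. Without loss of generality, let $\mathbf{v} = \lambda\mathbf{u}$. Then $v_i = \lambda u_i$ for $i = 1, 2, 3$. Substituting into the first expression of 4:

$$u_1 v_2 - u_2 v_1 = u_1(\lambda u_2) - u_2(\lambda u_1) = 0.$$

By symmetry, the other two expressions also vanish.

Conversely, assume statement 4 holds. If $\mathbf{u} = \mathbf{0}$, dependence is immediate. If $\mathbf{u} \neq \mathbf{0}$, choose an index $i$ for which $u_i \neq 0$. If $i = 1$, let $\lambda = v_1 / u_1$. Then

$$u_1 v_2 - u_2 v_1 = 0 \quad\text{and}\quad u_1 v_3 - u_3 v_1 = 0$$

give

$$v_2 = \lambda u_2 \quad\text{and}\quad v_3 = \lambda u_3.$$

Hence $\mathbf{v} = \lambda\mathbf{u}$. The cases $i = 2$ and $i = 3$ are handled in the same way. Thus statement 4 implies statement 1.

■

Condition 4 requires that all three determinants formed by the coordinate pairs of $\mathbf{u}$ and $\mathbf{v}$ vanish simultaneously. This algebraic requirement directly anticipates the cross product; specifically, two vectors in space are linearly dependent if and only if their cross product is the zero vector.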
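The determinant condition for two vectors is straightforward to test mechanically. The following sketch assumes vectors with integer (or otherwise exact) components stored as 3-tuples; the names `minors` and `are_dependent` are illustrative. With floating-point data the exact comparison with zero would need to be replaced by a tolerance.

```python
def minors(u, v):
    """The three 2x2 determinants formed from pairs of components of u and v."""
    return (
        u[0] * v[1] - u[1] * v[0],
        u[0] * v[2] - u[2] * v[0],
        u[1] * v[2] - u[2] * v[1],
    )

def are_dependent(u, v):
    """Two vectors are linearly dependent exactly when all three minors vanish."""
    return all(m == 0 for m in minors(u, v))
```

For the sample pair `(1, 2, 3)` and `(2, 4, 6)` every minor is zero, reflecting that the second vector is twice the first. For `(1, 0, 0)` and `(0, 1, 0)` the first minor equals 1, so the pair is independent.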

By negating the previous theorem, we obtain the corresponding criterion for independence.

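Let $\mathbf{u}, \mathbf{v} \in \mathbb{R}^3$ have components $u_i$ and $v_i$, and let $P$ and $Q$ be the points with position vectors $\mathbf{u}$ and $\mathbf{v}$. The following statements are equivalent:

- $\mathbf{u}$ and $\mathbf{v}$ are linearly independent.

- The points $O$, $P$, $Q$ are distinct and not collinear.

- The equation $\lambda\mathbf{u} + \mu\mathbf{v} = \mathbf{0}$ implies $\lambda = 0$ and $\mu = 0$.

- At least one of the expressions $u_1 v_2 - u_2 v_1$, $u_1 v_3 - u_3 v_1$, or $u_2 v_3 - u_3 v_2$ is non-zero.

Each statement here is the logical negation of the corresponding statement in the preceding dependence theorem.

■

Linear Independence of Three Vectors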

The third dimension naturally permits the simultaneous consideration of three vectors.

Three vectors are linearly dependent if at least one of them can be expressed as a linear combination of the other two. They are linearly independent if none is a linear combination of the others.

The standard basis vectors constitute a primary example of three linearly independent vectors. The algebraic criterion for the dependence of three vectors relies on finding a non-trivial linear combination that yields the zero vector.

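Three vectors $\mathbf{u}, \mathbf{v}, \mathbf{w} \in \mathbb{R}^3$ are linearly dependent if and only if there exist scalars $\lambda, \mu, \nu$, not all zero, such that

$$\lambda\mathbf{u} + \mu\mathbf{v} + \nu\mathbf{w} = \mathbf{0}.$$

Suppose the vectors are dependent. Then one vector, say $\mathbf{w}$, is a linear combination of the others: $\mathbf{w} = \lambda\mathbf{u} + \mu\mathbf{v}$. This rearranges to $\lambda\mathbf{u} + \mu\mathbf{v} - \mathbf{w} = \mathbf{0}$. Since the coefficient of $\mathbf{w}$ is $-1$, a combination exists where the scalars are not all zero.

Conversely, suppose $\lambda\mathbf{u} + \mu\mathbf{v} + \nu\mathbf{w} = \mathbf{0}$ with at least one non-zero scalar. Without loss of generality, assume $\nu \neq 0$. We divide by $\nu$ and solve for $\mathbf{w}$:

$$\mathbf{w} = -\frac{\lambda}{\nu}\mathbf{u} - \frac{\mu}{\nu}\mathbf{v}.$$

Thus $\mathbf{w}$ is a linear combination of $\mathbf{u}$ and $\mathbf{v}$, proving dependence.

■

Just as the linear dependence of two vectors implies collinearity, the linear dependence of three vectors implies coplanarity.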

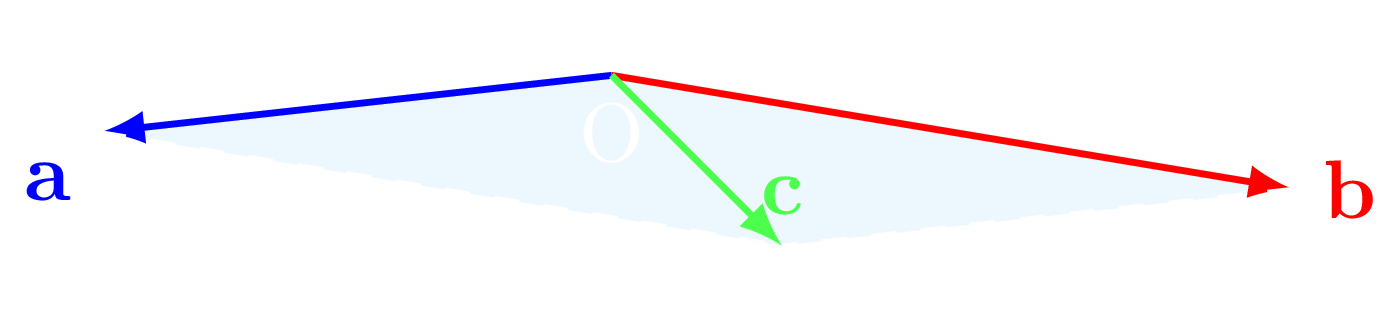

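Let $P$, $Q$, $R$ be the points with position vectors $\mathbf{u}, \mathbf{v}, \mathbf{w}$. The vectors $\mathbf{u}, \mathbf{v}, \mathbf{w}$ are linearly dependent if and only if the points $O, P, Q, R$ are coplanar (lie in the same geometric plane).

Suppose $\mathbf{u}, \mathbf{v}, \mathbf{w}$ are linearly dependent. Then one is a linear combination of the other two, say

$$\mathbf{w} = \lambda\mathbf{u} + \mu\mathbf{v}.$$

If $\mathbf{u}$ and $\mathbf{v}$ are linearly dependent, then $O$, $P$, and $Q$ are collinear, and $R$ lies on the same line. Hence all four points are collinear, and therefore coplanar. If $\mathbf{u}$ and $\mathbf{v}$ are linearly independent, then the vectors of the form $\lambda\mathbf{u} + \mu\mathbf{v}$ are exactly the position vectors of the points of the plane through $O$ spanned by $\mathbf{u}$ and $\mathbf{v}$. Since $\mathbf{w}$ has this form, the point $R$ lies in that plane. Thus $O$, $P$, $Q$, and $R$ are coplanar.

Conversely, assume $O$, $P$, $Q$, and $R$ are coplanar. If $O$, $P$, and $Q$ are collinear, then $\mathbf{u}$ and $\mathbf{v}$ are already linearly dependent, so the set is dependent. If $O$, $P$, and $Q$ are not collinear, then $O$, $P$, and $Q$ determine a unique plane through the origin. Every point in that plane has position vector of the form $\lambda\mathbf{u} + \mu\mathbf{v}$. Since $R$ lies in this plane, its position vector satisfies

$$\mathbf{w} = \lambda\mathbf{u} + \mu\mathbf{v}$$

for suitable scalars $\lambda$ and $\mu$. Geometrically, this may be seen by drawing through $R$ one line parallel to $\mathbf{v}$ and another parallel to $\mathbf{u}$. These meet the lines generated by $\mathbf{u}$ and $\mathbf{v}$ at points $A$ and $B$ respectively, and then

$$\overrightarrow{OR} = \overrightarrow{OA} + \overrightarrow{OB}.$$

Since $A$ lies on the line $OP$, we have $\overrightarrow{OA} = \lambda\mathbf{u}$; since $B$ lies on the line $OQ$, we have $\overrightarrow{OB} = \mu\mathbf{v}$. Hence $\mathbf{w}$ is a linear combination of $\mathbf{u}$ and $\mathbf{v}$, so $\mathbf{u}, \mathbf{v}, \mathbf{w}$ are linearly dependent.

■

The figure below shows the coplanar situation $\mathbf{w} = \lambda\mathbf{u} + \mu\mathbf{v}$.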

We now negate the dependence criteria exactly as before.

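Let $\mathbf{u}, \mathbf{v}, \mathbf{w} \in \mathbb{R}^3$, and let $P$, $Q$, $R$ be the points with these position vectors. The following statements are equivalent:

- $\mathbf{u}, \mathbf{v}, \mathbf{w}$ are linearly independent.

- The points $O, P, Q, R$ are distinct and not coplanar (so they form a tetrahedron).

- The equation $\lambda\mathbf{u} + \mu\mathbf{v} + \nu\mathbf{w} = \mathbf{0}$ implies $\lambda = 0$, $\mu = 0$, and $\nu = 0$.

This theorem is obtained by negating the algebraic and geometric criteria proved above.

■

For three vectors there is also a component criterion, analogous to the minor conditions for two vectors. Namely, $\mathbf{u}$, $\mathbf{v}$, and $\mathbf{w}$, with components $u_i$, $v_i$, $w_i$, are linearly dependent if and only if the determinant formed from their components is zero:

$$\begin{vmatrix} u_1 & v_1 & w_1 \\ u_2 & v_2 & w_2 \\ u_3 & v_3 & w_3 \end{vmatrix} = 0.$$

While this is a necessary and sufficient condition, the rigorous development of determinant theory for $3 \times 3$ systems is reserved for the Linear Algebra notes. For the present chapter, the geometric and algebraic criteria established above are sufficient.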
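Although the theory is deferred, the determinant test itself is easy to evaluate. The sketch below expands the determinant along its first row, with the three vectors as columns. The name `det3` is illustrative, and exact (for example integer) components are assumed so that comparison with zero is meaningful.

```python
def det3(u, v, w):
    """Determinant of the 3x3 array whose columns are u, v, w.

    Uses cofactor expansion along the first row (u[0], v[0], w[0]).
    """
    return (
        u[0] * (v[1] * w[2] - v[2] * w[1])
        - v[0] * (u[1] * w[2] - u[2] * w[1])
        + w[0] * (u[1] * v[2] - u[2] * v[1])
    )
```

For the three unit vectors along the coordinate axes the value is 1, consistent with their independence. For the sample triple `(1, 2, 3)`, `(4, 5, 6)`, `(5, 7, 9)` the value is 0, as expected because the third vector is the sum of the first two.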

General Linear Independence and Dimension

We conclude our investigation of linear independence by formalising the relationship between a set of vectors and its subsets. These results, while simple, are used repeatedly and apply structurally to vector spaces of any dimension.

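Let $\mathbf{u}, \mathbf{v}, \mathbf{w}$ be three vectors in $\mathbb{R}^3$. If any one of them is the zero vector, or if any pair of them is linearly dependent, then the entire set is linearly dependent.

If $\mathbf{u} = \mathbf{0}$, then

$$1\mathbf{u} + 0\mathbf{v} + 0\mathbf{w} = \mathbf{0}$$

and the coefficients are not all zero. So the three vectors are linearly dependent. If a pair, say $\mathbf{u}$ and $\mathbf{v}$, is linearly dependent, there exist scalars $\lambda$ and $\mu$, not both zero, such that

$$\lambda\mathbf{u} + \mu\mathbf{v} = \mathbf{0}.$$

Then

$$\lambda\mathbf{u} + \mu\mathbf{v} + 0\mathbf{w} = \mathbf{0},$$

again with coefficients not all zero. Hence the three vectors are linearly dependent.

■

Conversely, the property of linear independence is inherited by subsets.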

Let $\{\mathbf{u}, \mathbf{v}, \mathbf{w}\}$ be a linearly independent set of vectors. Then they are distinct non-zero vectors, and any pair chosen from them is linearly independent.

This is the contrapositive of the preceding theorem. If one vector were zero, or if some pair were dependent, then the full set would be dependent. Therefore an independent triple must consist of distinct non-zero vectors, and every pair drawn from it must be independent.

■

The definition of dependence itself extends to any finite set.

Let $k \geq 2$ be a natural number. A set of $k$ vectors $\mathbf{v}_1, \dots, \mathbf{v}_k$ is said to be linearly dependent if at least one of them can be written as a linear combination of the remaining vectors. If no such combination exists, they are linearly independent.

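In $\mathbb{R}^3$ there is a strict upper bound on how many independent vectors can exist. The next theorem identifies that bound.

Any four vectors in $\mathbb{R}^3$ are linearly dependent.

Let the four vectors be $\mathbf{a}, \mathbf{b}, \mathbf{c}, \mathbf{d}$. We seek scalars $\lambda_1, \lambda_2, \lambda_3, \lambda_4$, not all zero, satisfying:

$$\lambda_1\mathbf{a} + \lambda_2\mathbf{b} + \lambda_3\mathbf{c} + \lambda_4\mathbf{d} = \mathbf{0}.$$

Substituting the explicit components $\mathbf{a} = \begin{pmatrix} a_1 \\ a_2 \\ a_3 \end{pmatrix}$, $\mathbf{b} = \begin{pmatrix} b_1 \\ b_2 \\ b_3 \end{pmatrix}$, etc., the vector equation translates into a system of three simultaneous linear equations:

$$\begin{aligned} \lambda_1 a_1 + \lambda_2 b_1 + \lambda_3 c_1 + \lambda_4 d_1 &= 0, \\ \lambda_1 a_2 + \lambda_2 b_2 + \lambda_3 c_2 + \lambda_4 d_2 &= 0, \\ \lambda_1 a_3 + \lambda_2 b_3 + \lambda_3 c_3 + \lambda_4 d_3 &= 0. \end{aligned}$$

This is a system of three linear equations in four unknowns, and every equation has right-hand side zero. The algebraic fact recorded in the following remark states that such a system must have a solution other than

$$\lambda_1 = \lambda_2 = \lambda_3 = \lambda_4 = 0.$$

Thus there exist scalars $\lambda_1, \lambda_2, \lambda_3, \lambda_4$, not all zero, satisfying the system. Dividing by a non-zero coefficient expresses the corresponding vector as a linear combination of the other three, and therefore the four vectors are linearly dependent.

■

The proof uses the following fact: if one has fewer linear equations than unknowns, and every right-hand side is zero, then the system cannot force all unknowns to be zero. In the present case there are four unknowns but only three equations, so at least one parameter remains undetermined. Choosing that parameter to be non-zero produces a solution for which not all of $\lambda_1, \lambda_2, \lambda_3, \lambda_4$ vanish.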

We leave the proof there, where it properly belongs: in the later discussion of Gaussian elimination, row reduction, homogeneous systems, pivots, free variables, and rank. In that setting we will prove the general statement that a homogeneous linear system with more unknowns than equations must admit a non-trivial solution.
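As a preview of that later discussion, and not as the proof itself, the following sketch shows how row reduction can produce an explicit non-trivial relation among four given vectors. It is an illustration under stated assumptions: the function name `nontrivial_relation` is hypothetical, vectors are 3-tuples of integers or fractions, and exact arithmetic is obtained from Python's standard `fractions` module.

```python
from fractions import Fraction

def nontrivial_relation(vectors):
    """Return coefficients c (not all zero) with c[0]*v0 + ... + c[3]*v3 = 0.

    `vectors` must contain exactly four vectors of R^3 given as 3-tuples.
    """
    if len(vectors) != 4:
        raise ValueError("expected exactly four vectors")
    n = 4
    # Row i of the matrix holds the i-th components of the four vectors.
    rows = [[Fraction(v[i]) for v in vectors] for i in range(3)]
    pivots = []          # pivot column of each reduced row, in order
    r = 0                # index of the next row to receive a pivot
    for c in range(n):
        if r == 3:
            break
        # Look for a row at or below r with a non-zero entry in column c.
        p = next((i for i in range(r, 3) if rows[i][c] != 0), None)
        if p is None:
            continue
        rows[r], rows[p] = rows[p], rows[r]
        pivot = rows[r][c]
        rows[r] = [x / pivot for x in rows[r]]
        # Clear column c in every other row.
        for i in range(3):
            if i != r and rows[i][c] != 0:
                factor = rows[i][c]
                rows[i] = [a - factor * b for a, b in zip(rows[i], rows[r])]
        pivots.append(c)
        r += 1
    # At most three pivots exist, so some column is free.
    free = next(c for c in range(n) if c not in pivots)
    coeffs = [Fraction(0)] * n
    coeffs[free] = Fraction(1)   # choose the free parameter to be non-zero
    for row_index, c in enumerate(pivots):
        coeffs[c] = -rows[row_index][free]
    return coeffs
```

For the sample input `[(1, 0, 0), (0, 1, 0), (0, 0, 1), (1, 2, 3)]` the fourth column is free, and the sketch returns the coefficients $-1, -2, -3, 1$, which records the relation $(1, 2, 3) = 1(1,0,0) + 2(0,1,0) + 3(0,0,1)$. The key point matches the remark above: three rows can hold at most three pivots, so at least one of the four unknowns is always free.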

It follows at once that any set of five or more vectors in $\mathbb{R}^3$ is also linearly dependent, since such a set contains a subset of four vectors.

This observation reveals a fundamental characteristic of the vector space $\mathbb{R}^3$. We have already seen that the three standard basis vectors are linearly independent, but the theorem above shows that no fourth vector can be added to an independent set of three vectors in $\mathbb{R}^3$. The number $3$ is therefore the exact maximum.

The dimension of a vector space is the maximum number of linearly independent vectors that can simultaneously exist within it.

- The vector space $\mathbb{R}^2$ has dimension $2$.

- The vector space $\mathbb{R}^3$ has dimension $3$.

This gives an exact algebraic meaning to the phrase three-dimensional space and aligns with the geometric intuition developed throughout the chapter.